A month ago, while building my own AI environment, I realised I was dealing with a problem that had very little to do with the technology itself. The real issue was how to design better AI decision systems around the work.

At first, I approached it like most people do. I looked at models, interfaces, workflows, prompt structures, and all the usual questions that make AI feel like an optimisation exercise. Which setup would give me the best output? Which combination of tools would make the system more useful? Which layer could speed up the work without adding friction?

Those questions were not wrong. They were simply too shallow.

The tools worked. The models were improving fast. The possibilities kept expanding. On the surface, it looked like the standard AI story: more capability, more efficiency, more leverage. Yet the more I worked with the system, the more obvious something else became. The real challenge was not getting access to AI. It was deciding how I wanted to think with it without creating more noise than value.

From Tool Setup to Decision Environment

That distinction changed the whole project.

What I thought would be a tooling setup slowly turned into a decision environment. I had to become much clearer about what kind of thinking I wanted the system to support, what kind of work I wanted to accelerate, where speed was useful, where friction was necessary, and what still required my own judgement. I was not really building an AI stack. I was designing the conditions under which AI could contribute without weakening the quality of my decisions.

That tension is now everywhere.

Why most AI conversations stay too superficial

Most conversations around AI still revolve around tools. Which model is better. Which stack scales. Which use case unlocks value faster. It sounds like the right discussion because it feels concrete. It gives people something to compare, test, and buy.

It also misses the point.

The friction I keep seeing in teams is rarely technical. It is structural. AI does not introduce a completely new category of problem. More often, it exposes the ones that were already there: vague ownership, fragmented information, unclear decision criteria, weak follow-through, and workflows that rely too heavily on implicit coordination. What looked manageable before starts to crack once speed, optionality, and complexity increase. I explored a similar tension in Human Machine Culture.

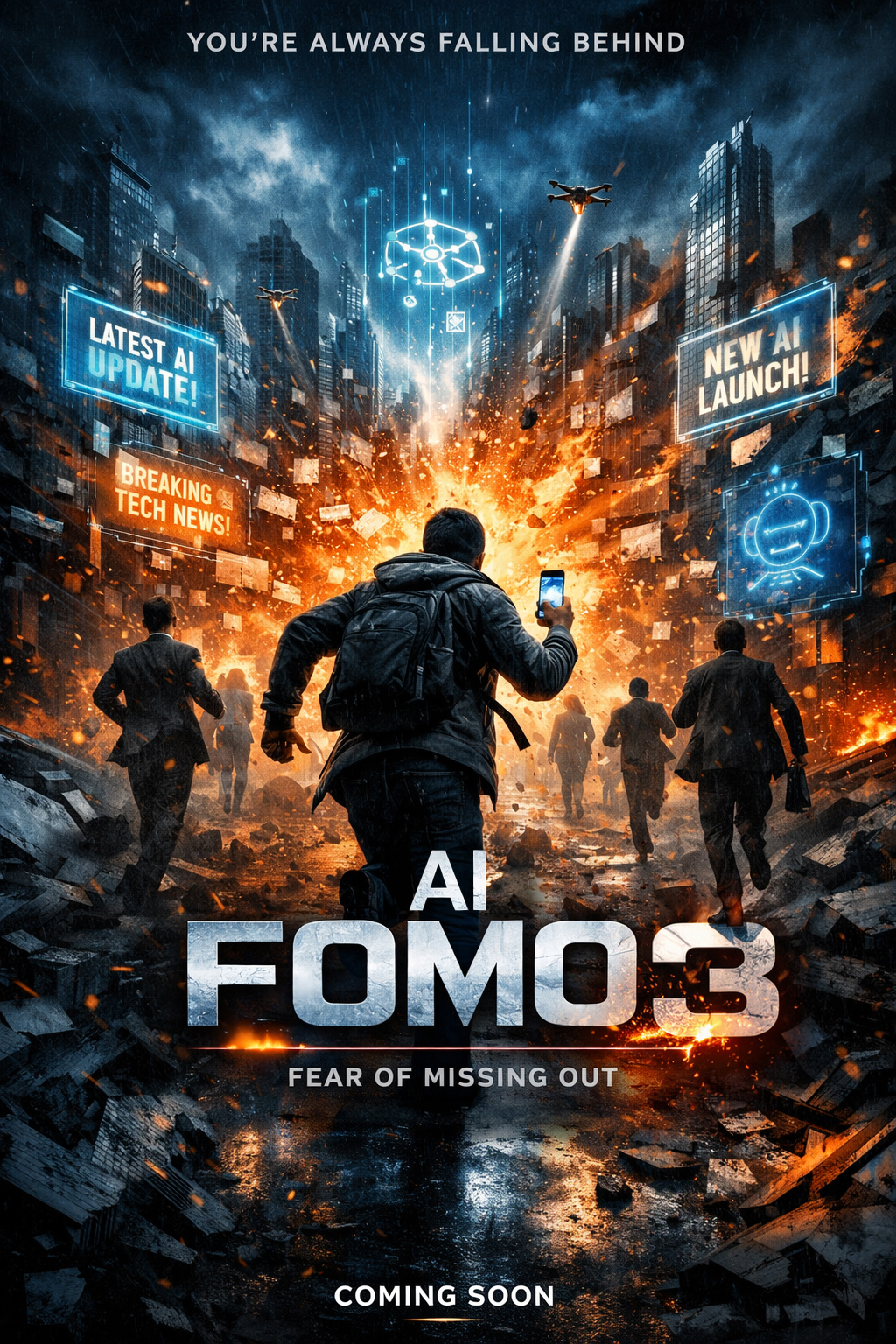

That is why so many AI conversations feel slightly anxious even when the language around them is optimistic. The anxiety is not only about missing the next model or platform. It is about sensing that the current way of thinking, deciding, and coordinating work does not scale under new conditions.

AI does not create the mess. It reveals it.

One of the easiest mistakes to make with AI is to confuse more possibility with more progress.

AI increases optionality. It gives people more routes, more drafts, more interpretations, more outputs, more ways to explore. In theory, that should be useful. In practice, if there is no structure for deciding what matters, it often produces the opposite of clarity. It produces movement without direction.

This is where AI FOMO becomes operational.

Teams start testing everything because they are afraid of missing something important. They keep experimenting because experimentation feels intelligent. They move quickly between ideas, outputs, and directions, but struggle to convert any of that into clearer decisions. The problem is not that they are doing too little. It is that the system around the work is not designed to absorb more possibility.

So activity rises. Clarity does not.

AI systems are structured. Most organisations are not

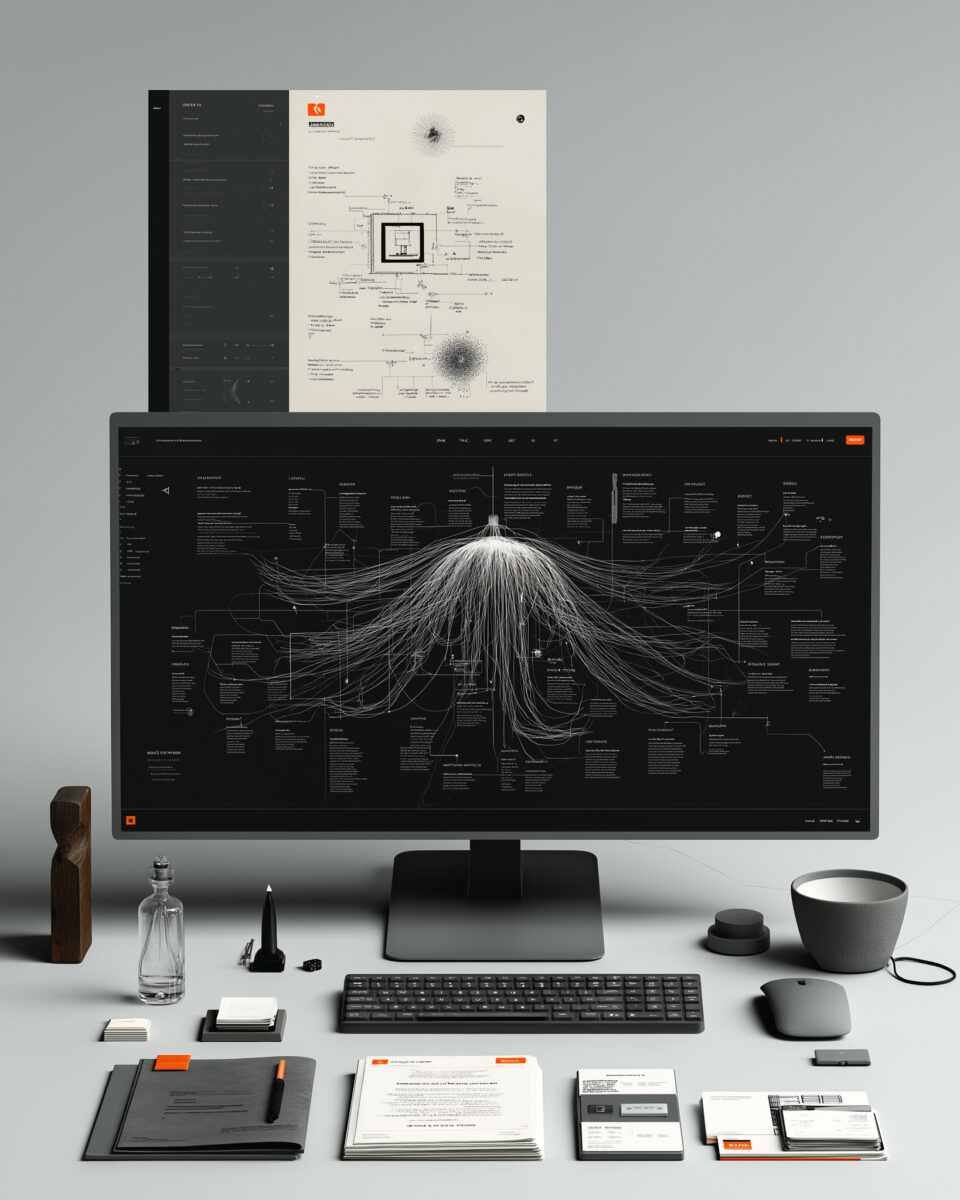

The contradiction becomes even sharper when you look at how effective AI systems actually work. They are not magical engines floating above the mess. They rely on structure. They break problems into steps. They manage context. They route decisions. They validate outputs. This is the pattern behind what are often called agentic AI systems.
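To make that structure concrete, here is a minimal sketch of the four behaviours named above: breaking a problem into steps, managing context, routing, and validating. Everything in it is an illustrative assumption of mine (the step names, the `Context` class, the hard-coded handlers); it is a toy, not how any particular agentic framework is implemented.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Context:
    """Shared state carried from one step to the next (hypothetical)."""
    task: str
    notes: Dict[str, str] = field(default_factory=dict)


def plan(task: str) -> List[str]:
    # Break the problem into steps. Hard-coded here for illustration.
    return ["gather", "draft", "review"]


def route(step: str) -> Callable[[Context], str]:
    # Route each step to the handler responsible for it.
    handlers: Dict[str, Callable[[Context], str]] = {
        "gather": lambda ctx: f"facts for {ctx.task}",
        "draft": lambda ctx: f"draft based on {ctx.notes['gather']}",
        "review": lambda ctx: f"reviewed: {ctx.notes['draft']}",
    }
    return handlers[step]


def validate(output: str) -> bool:
    # Validate the output before it is allowed into the shared context.
    return bool(output.strip())


def run(task: str) -> Context:
    ctx = Context(task=task)
    for step in plan(task):
        output = route(step)(ctx)
        if not validate(output):
            raise ValueError(f"step {step!r} produced an empty output")
        ctx.notes[step] = output  # manage context: later steps can read this
    return ctx
```

Even in a toy like this, every step has an owner, an explicit input, and a check before its output moves on. That explicitness is the part most organisational decision processes lack.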

Most organisations still expect decisions to emerge from a mix of meetings, scattered documents, partial context, and implied ownership. That mismatch matters. When AI enters a system where decisions are already unclear, it does not create transformation by default. It amplifies the existing environment.

If the surrounding system is coherent, AI can accelerate useful work.

If the surrounding system is messy, AI simply makes the mess more productive.

Why so many AI initiatives lose momentum

That is also why so many AI initiatives do not fail in a dramatic way. They stall.

The pattern is familiar. A team starts with energy. A pilot gets launched. Interesting outputs appear quickly. People see enough value to remain curious, but not enough structural integration to change how the work actually happens. At that point, momentum starts to leak. I wrote about a related version of this problem in “Innovation fails when teams cannot think together”.

Not because the model is weak. Not because the team lacks enthusiasm. Because nobody has made the next layer explicit.

What decision is this supposed to improve? What inputs count as valid? How do we judge whether the output is useful? Who owns the final call? Where does this fit in the workflow instead of sitting beside it as an experiment that everyone politely admires and nobody really adopts?

Without answers to those questions, AI remains peripheral. It becomes something teams try rather than something the organisation knows how to use.

That is not a tooling problem. It is a design problem.

The part most teams underestimate: context

AI performance depends less on abstract capability than on the quality of the context surrounding the task. What information is available. How it is structured. Whether it arrives at the right moment. Whether it is actually relevant to the decision being made. That is also why discussions around AI literacy are becoming more serious: context, role, and informed use matter more than generic awareness.

Most organisations are not operating with decision-ready context. They have data, documents, updates, dashboards, channels, and scattered expertise. What they often lack is a usable architecture for turning that material into something a team can act on with confidence.

So even when the model is strong, the surrounding conditions are weak.

That is why demos often feel more impressive than daily work. In a demo, the context is curated. In organisations, it usually is not.

What I learned building my own system

Building my own system made that painfully clear.

At the beginning, I thought I was mainly solving for productivity. I wanted a setup that could support recurring work, help me think more deeply, make use of my accumulated knowledge, and simplify parts of production. What I actually discovered was that the real work was not selecting the right tools. It was deciding what deserved support in the first place.

I had to draw lines.

Some tasks benefited from acceleration. Some needed resistance. Some were worth delegating to a system because the value came from speed or synthesis. Others needed to stay close to me because the value came from interpretation, judgement, or strategic tension. I also realised that a system can become dangerous precisely when it becomes too convenient. If it helps you produce before you have understood, it does not improve thinking. It only industrialises premature conclusions.

That was the real lesson.

AI became useful only when I stopped treating it as an add-on and started treating it as part of an architecture for thinking and deciding. Once that shift happened, the setup became more effective. Not because it was more complex, but because it was more intentional. I was no longer asking, “What can this tool do?” I was asking, “What kind of decision environment am I building here?”

The question that actually matters

To me, this is where the conversation around AI needs to mature.

The real question is not, “Where can we use AI?”

That question usually produces a shopping list.

A more useful question is this: where do decisions slow down, get revisited, fragment across people, or quietly disappear? That is usually where the real leverage sits. Not because AI replaces judgement, but because it forces the organisation to clarify what judgement depends on.

Why teams rarely solve this alone

This is also the point many teams struggle to reach on their own. Not because they lack intelligence, but because they are already inside the system they are trying to diagnose. They experience the symptoms as daily friction, disconnected tools, repeated meetings, inconsistent outputs, and stalled momentum. What they often do not see clearly is the underlying decision structure producing those patterns. That is close to the argument I made in “AI literacy is not a training problem”.

That is why this work is rarely solved by adding another tool on top.

It requires making the invisible visible. It requires stepping back far enough to examine how decisions actually move, where context breaks, where ownership blurs, and where the team is compensating for structural weakness with extra effort. That is not a technical intervention first. It is a facilitative one. Before teams need a solution, they usually need a better way to surface the problem they are actually dealing with.

The shift is not from no AI to AI. It is from vague decisions to designed decisions

When that work is done properly, AI can be valuable. It can strengthen analysis, reduce repetition, improve synthesis, and support better timing. When that work is ignored, AI tends to produce the opposite: more content, less coherence.

So the shift is not really from no AI to AI.

It is from vague decisions to designed decisions.

That is where AI decision systems start to matter. Not as a technical layer added on top of the organisation, but as a deliberate way of shaping how information, judgement, and responsibility come together around the work.

AI as a stress test

In that sense, AI is not best understood as a capability layer. It is a stress test.

It tests whether the organisation knows how decisions are made. It tests whether context is usable. It tests whether people understand their role in the process. It tests whether collaboration is actually structured or just assumed. It tests whether the system can handle more optionality without collapsing into noise.

If those things are weak, AI exposes the weakness quickly.

If those things are strong, AI compounds them.

That is why two organisations can use similar tools and get completely different outcomes. The difference is rarely the technology alone. It is the quality of the system the technology enters.

What this should push us to examine

So no, AI FOMO is not really about missing the newest platform, model, or feature.

It is about something less comfortable.

It is about recognising that many existing ways of working were already too loose, too fragmented, or too dependent on implicit coordination. AI simply removes the illusion that this can continue without consequence.

More information will not solve that. Better models alone will not solve that either.

Only better AI decision systems will.

That is where the work becomes interesting.

Not in asking how to add AI on top, but in examining where decisions in the current setup are delayed, avoided, diluted, or repeatedly reopened because the system around them is still too vague.

Start with one recurring decision. Map who touches it, what inputs shape it, and where it repeatedly stalls. That is usually where the real problem becomes visible.
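If it helps to make that mapping exercise tangible, here is one rough way to record it. This is a sketch under my own assumptions: the `DecisionRecord` fields, the `summarise` helper, and the sample names are all hypothetical, and a spreadsheet or a whiteboard would do the same job.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class DecisionRecord:
    """One occurrence of the same recurring decision (hypothetical shape)."""
    people: List[str]          # who touched it
    inputs: List[str]          # what information shaped it
    stalled_at: Optional[str]  # stage where it stalled, or None if it did not


def summarise(records: List[DecisionRecord]) -> Dict[str, Counter]:
    # Count how often each person, input, and stall point appears.
    return {
        "people": Counter(p for r in records for p in r.people),
        "inputs": Counter(i for r in records for i in r.inputs),
        "stalls": Counter(r.stalled_at for r in records if r.stalled_at),
    }


# Illustrative data only: three rounds of a made-up pricing decision.
history = [
    DecisionRecord(["Ana", "Ben"], ["sales report"], "sign-off"),
    DecisionRecord(["Ana", "Cy"], ["sales report", "survey"], None),
    DecisionRecord(["Ana", "Ben"], ["survey"], "sign-off"),
]

summary = summarise(history)
```

With data like this, `summary["stalls"].most_common(1)` points at the stage where the decision stalls most often, which is usually a better starting point than another tool comparison.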

That is also usually the moment a team stops talking about AI as a tool and starts seeing it for what it really is.

A mirror held up to the way it thinks.