As AI enters everyday work, many organisations are responding in the most predictable way possible.

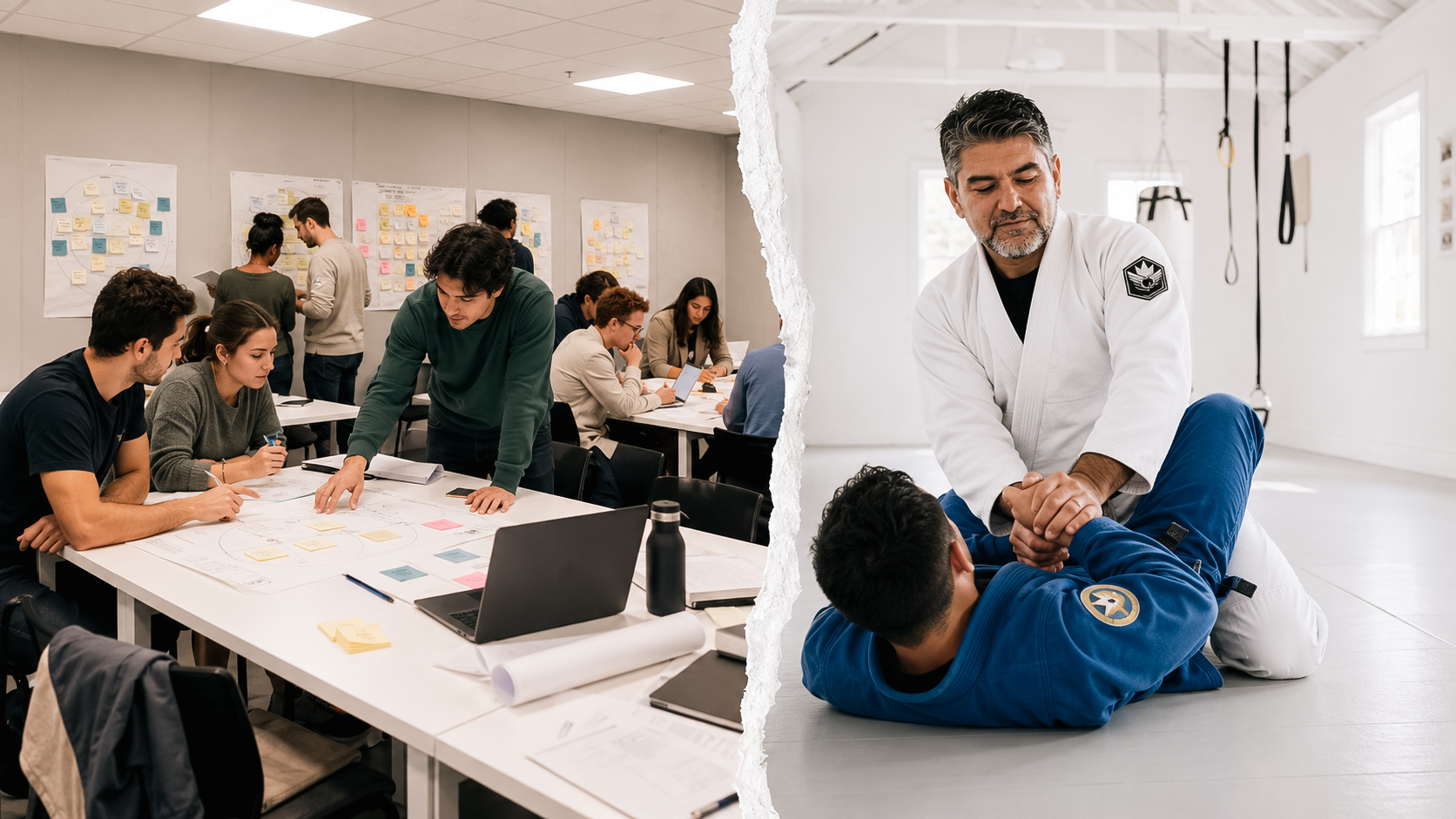

I have seen leadership teams discuss AI use as if the only question were access. In reality, the question that mattered was who still owned judgment once the output looked credible.

They schedule a training.

A few workshops. A prompt guide. Maybe a lunch session on responsible AI. Everyone leaves with a better vocabulary, a few practical tricks, and the comforting sense that progress is happening.

It is not useless. It is also not the real work.

AI literacy is quickly becoming one of those terms that sound useful while hiding the actual problem. Under Article 4 of the EU AI Act, providers and deployers of AI systems must take measures to ensure, to their best extent, a sufficient level of AI literacy among their staff and others dealing with those systems on their behalf. The important point is that this is not just about generic awareness. It is about context, risk, role, and the ability to make informed use of AI.

That matters, because most teams do not struggle with AI for lack of access to tools.

They struggle because nobody has clearly designed how judgment should work once those tools are in the room.

That is not a training issue. It is a leadership design issue.

AI literacy is being misunderstood

A lot of organisations are still framing AI literacy as if it were equivalent to digital skills training. Teach people what the tool does. Show them how to write better prompts. Explain a few risks. Then move on.

The logic is simple. If people know more about AI, they will use it better.

That logic is not wrong. It is incomplete.

The real friction tends to appear one layer deeper. Teams are not only asking, “How do I use this tool?” They are also, often implicitly, asking:

- When is it appropriate to use AI here?

- What kind of decisions can AI support, and which ones should remain fully human?

- What level of verification is required before acting on an output?

- Who is accountable when an AI-assisted decision goes wrong?

- What gets escalated, challenged, or documented?

Those are not usage questions. They are operating questions.

And operating questions are leadership questions.

The comforting fiction of AI upskilling

The problem with most AI upskilling efforts is not that they are wrong. It is that they are too narrow.

They assume better individual capability will naturally produce better organisational judgment.

It won’t, at least not on its own.

Once AI enters the workflow, the quality of outcomes depends less on whether one person can write a strong prompt and more on whether the surrounding system makes good judgment possible. If boundaries are vague, review is inconsistent, and accountability is diffuse, even capable teams will drift into poor decisions.

This is where many organisations get caught.

In practice, people learn just enough to start using AI more often, while boundaries, oversight, and standards for decision quality remain unclear. The result is not mature adoption. It is distributed ambiguity.

Some teams become overly cautious and avoid using AI even where it could help. Others use it too casually, assuming speed is proof of value. A few enthusiastic people start shaping informal norms for everyone else. Leadership, meanwhile, often believes the problem is being handled because training has been delivered.

It hasn’t. It has merely been delegated.

Literacy without structure creates false confidence

This is the more dangerous effect.

When people are partially trained but structurally unsupported, confidence can increase faster than judgment.

That is when teams begin using AI in ways that feel reasonable but remain poorly governed. A draft becomes a recommendation. A summary becomes an interpretation. A convenience becomes an influence on decision quality.

This is why the AI literacy requirement matters more than it first appears. It is not asking whether people have heard about AI. It is forcing organisations to think about who is using these systems, in what context, with what degree of risk, and with what capability to exercise informed judgment.

So the better question is not: Have we trained people on AI?

It is: Have we designed the conditions under which people can use AI with sound judgment?

AI literacy is really about decision architecture

Once you look at it properly, AI literacy sits inside a wider system of leadership choices.

It is shaped by decision rights, review mechanisms, escalation rules, and the level of human oversight built into work. If those elements are weak, no amount of training will solve the underlying problem.

This is where leadership needs a clearer decision system. In my work, I often use frameworks like The CLEAR Filter to help teams evaluate whether a tool deserves a place in their workflow.

A team does not become AI-literate because it knows the language of AI.

A team becomes AI-literate when it knows how to act responsibly, critically, and consistently in real situations.

That means leaders need to define more than capability. They need to define the environment in which capability is exercised.

For example:

- Which tasks are acceptable to augment with AI?

- Which outputs require human review before use?

- Where is traceability necessary?

- What kinds of errors are tolerable, and which are unacceptable?

- When should speed be prioritised, and when should caution override efficiency?

Most organisations skip this because it is harder to package than training.

Unfortunately, it is also the part that determines whether AI improves decision-making or quietly degrades it.

A concrete example: when AI enters hiring before leadership is ready

Imagine a leadership team in a growing SME.

The HR lead starts using AI to help draft job descriptions. Then someone in operations uses it to summarise interview notes. A manager asks whether AI could help shortlist candidates faster. None of this feels dramatic. In fact, it looks efficient.

So the team moves forward.

At first, the gains seem obvious. Less time spent writing. Faster comparisons. Cleaner summaries. A more streamlined process.

Then the real questions begin.

- Who checks whether the AI summary has flattened important nuances in a candidate’s profile?

- Can AI be used only for admin support, or also to influence selection decisions?

- If two candidates are ranked differently because one manager relied more heavily on AI-generated interpretation, is that still a human decision?

- Who is accountable if bias enters the process through convenience rather than intent?

This is where the illusion of “AI literacy as training” starts to crack.

At this point, the issue is no longer whether people know how to use AI. The issue is whether the leadership team has designed clear decision boundaries around its use.

Without that structure, the process quietly shifts. Human judgment becomes less visible. Responsibility becomes harder to trace. Speed increases, but clarity decreases. The issue is not only whether people have access to AI. It is whether the organisation has built the kind of adaptive culture that allows people to question, verify, and adjust before weak decisions become normal.

That is the real risk.

AI does not need to be making the final hiring decision to reshape the quality of the decision. It only needs to start influencing what gets noticed, what gets simplified, and what gets trusted without challenge.

This is why AI literacy cannot stop at skills. It must include judgment, oversight, and explicit design choices around where AI supports work and where human responsibility remains non-negotiable.

The leadership task is not to know everything about AI

This is where the conversation often becomes distorted.

Leaders sometimes assume they need to become technical experts before they can govern AI well. Usually that is the wrong frame.

In most organisations, the core challenge is not technical mastery. It is structural clarity.

Leaders need to understand enough to ask better questions, define appropriate boundaries, and ensure that responsibility does not disappear into the machine.

That is a different standard.

It means designing a system where teams know:

- what AI is being used for

- what good use looks like

- what risks matter in their context

- when human judgment must intervene

- how accountability remains visible

This is why the phrase “AI literacy” can be misleading.

It sounds educational.

But in practice, it is organisational.

What organisations should design now

If AI is already showing up in meetings, marketing, operations, strategy work, research, hiring, or internal communication, then the issue is already live.

Not in theory. In workflow.

That means organisations should stop treating AI literacy as a side initiative and start addressing it as part of leadership design. The starting point is not another awareness session. It is a set of design decisions:

- Define where AI can and cannot support work. Do not leave this to personal interpretation.

- Clarify the human standard for review. What must be checked before action is taken?

- Match literacy to role and risk. A generic approach sounds efficient, but it usually ignores where actual exposure sits. Different roles require different levels of judgment.

- Build escalation paths. If a team sees a risk, ambiguity, or misuse, where does it go?

- Treat judgment as a design priority. The point is not just to help people use AI faster. It is to help them use it without weakening the quality of decisions.

AI maturity is judgment by design

If this tension already feels familiar inside your team, the next step is not another AI training session.

It is a conversation about how decisions are actually being made, where judgment is becoming blurred, and what leadership needs to redesign before AI habits harden into culture.

Most organisations also lack clear escalation paths. When something feels off, teams often do not know where it goes or who owns the final call. That is where decision quality quietly breaks down.

Good oversight also depends on psychological safety, because people need to feel able to challenge weak outputs before they shape important decisions.

This is the work. Not more awareness. Not better prompts.

Designing how decisions hold under pressure. That is where AI literacy becomes real.

FAQ

What is AI literacy in an organisation?

AI literacy in an organisation is not only the ability to use AI tools. It also includes understanding where AI can support work, what risks matter in context, when human review is required, and how accountability remains visible in decision-making.

Why is AI literacy a leadership issue?

AI literacy becomes a leadership issue when AI starts influencing everyday decisions. Leaders need to define boundaries, review standards, escalation paths, and the role of human judgment so that speed does not reduce decision quality.

How can leadership teams improve AI literacy?

Leadership teams improve AI literacy by going beyond tool training. They need to design clear decision rights, define where AI can and cannot be used, match oversight to risk, and create conditions where people can question outputs before acting on them.